Reconstruction-Free Classification for Lensless Imaging Systems

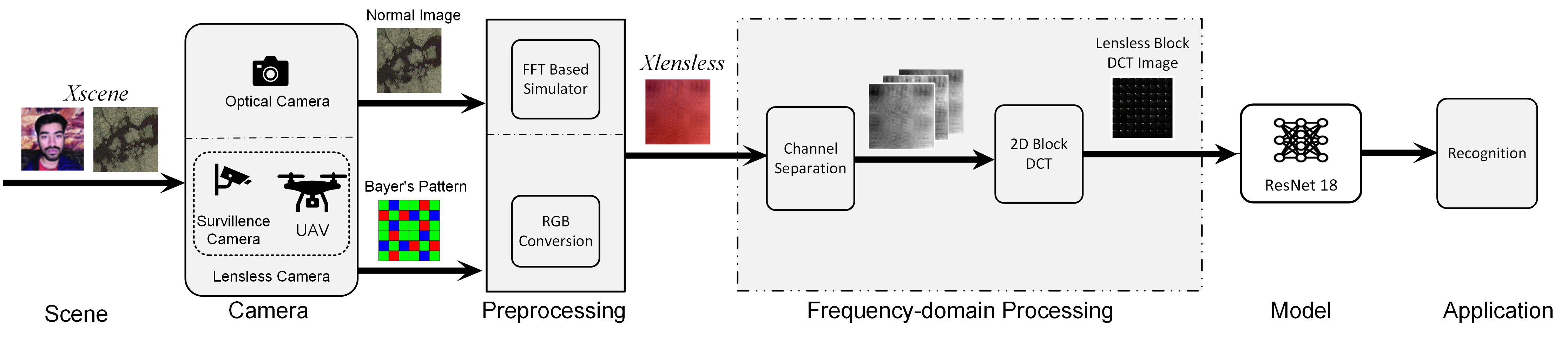

Learning semantic information directly from lensless sensor measurements without first reconstructing a human-viewable image.

I am a research engineer and PhD candidate working at the intersection of machine learning, computational imaging, and privacy. My research focuses on building learning systems that operate directly on encoded sensor measurements rather than human-viewable images.

Before my PhD, I built production systems from the ground up as a software engineer, team lead, engineering manager, and backend solutions architect. I have led teams, designed backend and data platforms, built cloud pipelines, and scaled systems across healthcare, insurance, analytics, and research environments. That is what I bring into research: I like hard problems, but I also like building the complete system around them.

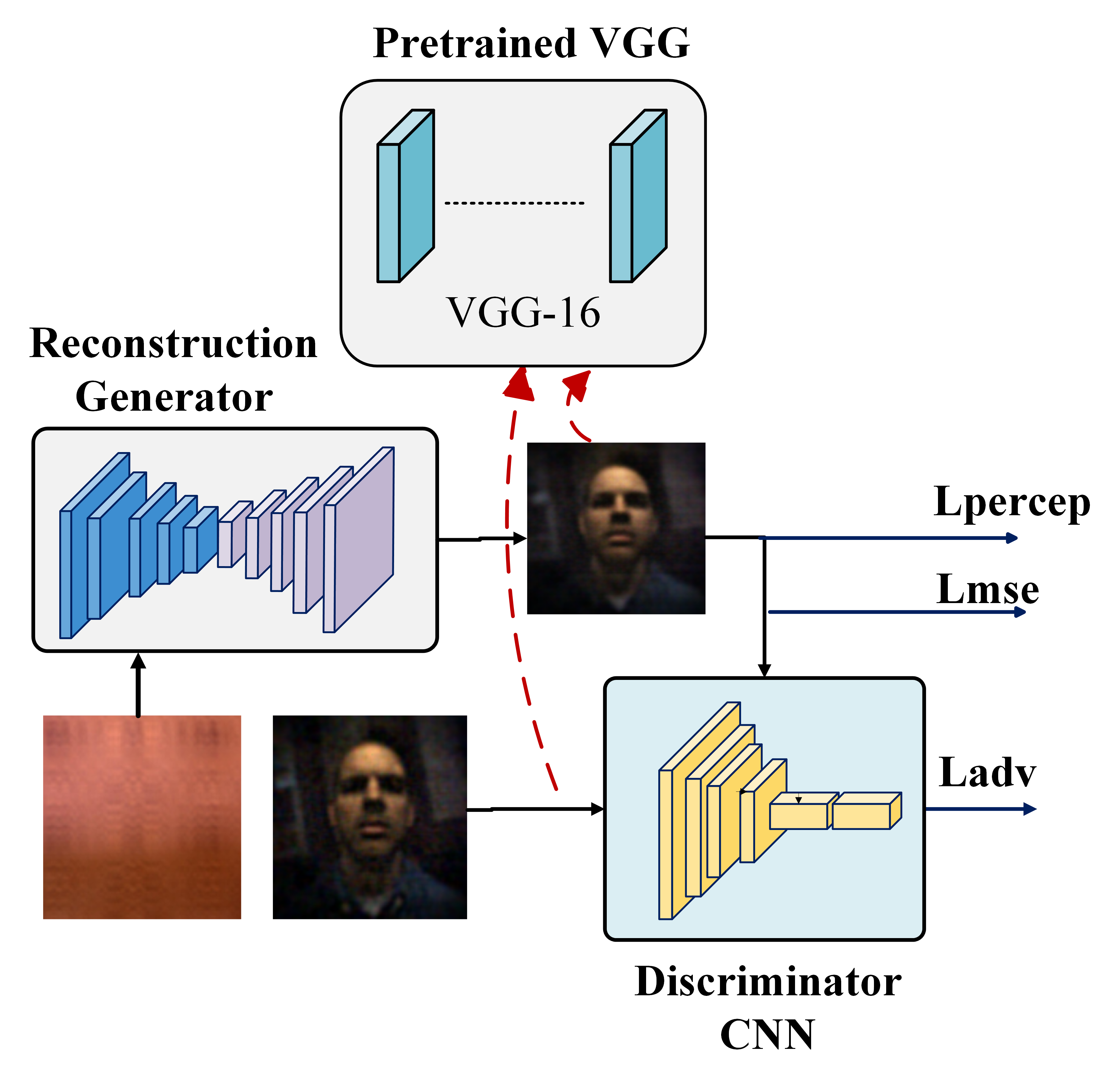

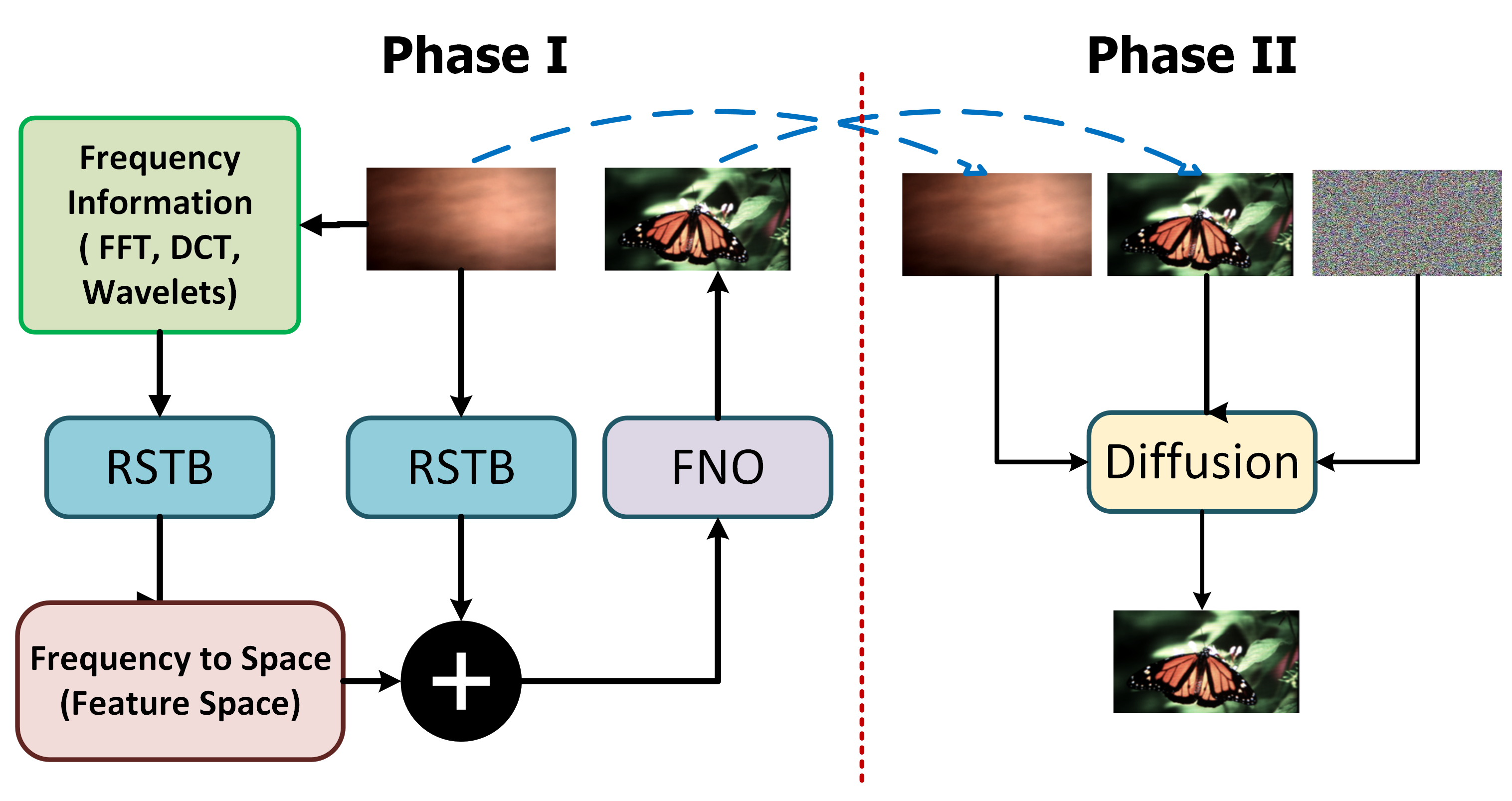

A research overview of the full lensless privacy pipeline.

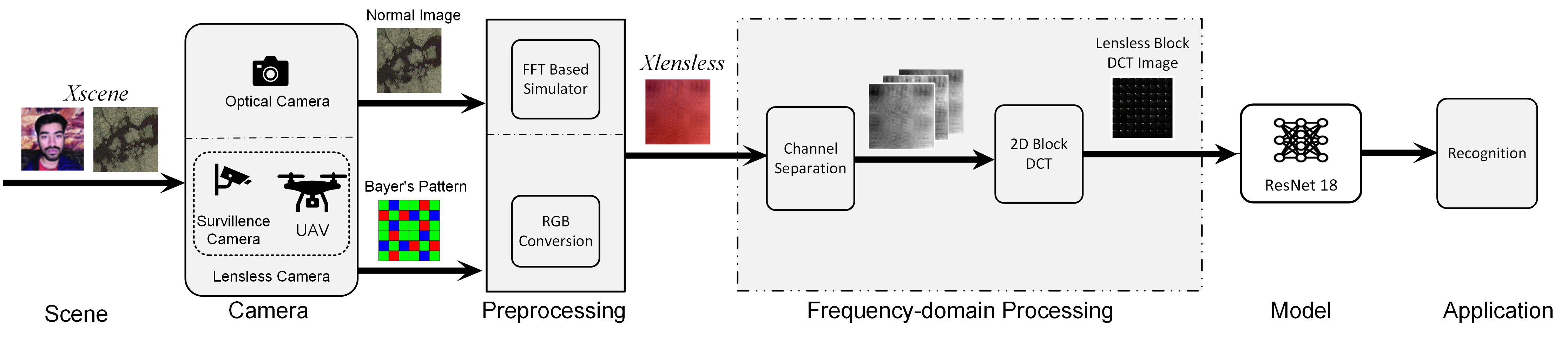

Communicating lensless images while preserving privacy and defending against reconstruction threats, by hiding full diffraction representations inside natural RGB containers.

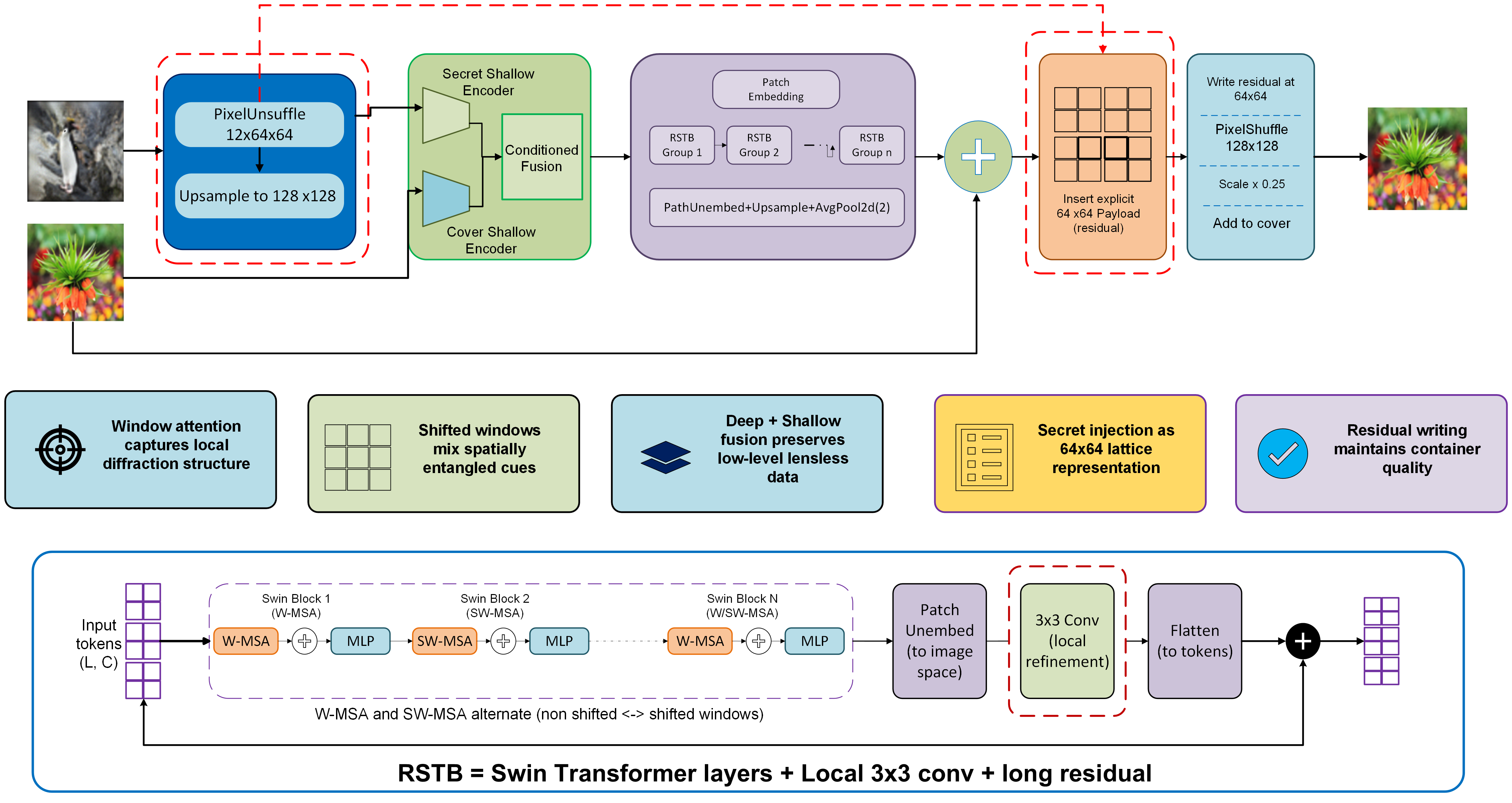

Finding the tradeoff between visual reconstruction risk and semantic understanding in lensless classification systems.

Interpreting lensless diffraction measurements using optical forward models, PSF structure, and physics-guided learning.

Sumit Bhattarai, Pramil Paudel, Zhu Li, Bo Luo, Fengjun Li

Pramil Paudel, Fengjun Li

Pramil Paudel, Bo Luo, Fengjun Li

Pramil Paudel, Daniel Tapia Takaki

Bikal Adhikari, Pramil Paudel · Heritage Publication, Kathmandu

Deep learning, CNNs, GANs, Transformers, reconstruction models, privacy-preserving ML, adversarial robustness, and classical ML.

Backend development, Java frameworks, cloud computing, experiment automation, and scalable code organization.

Large-scale data processing, search, and analytics infrastructure.

Electronics background with hands-on experience in microcontrollers, digital logic, and circuit design.

University of Kansas, USA. Research focus: privacy-preserving computer vision and lensless imaging.

Tribhuvan University, Nepal.

Python Programming · Object-Oriented Programming · Data Structures and Algorithms · Digital Logic

Software Engineering I · Software Engineering II

Microcontrollers and Computer Organization · Web Application Frameworks (Groovy on Grails, Spring Boot, Java Servlets) · Machine Learning Fundamentals, Version Control, and Reproducibility

Secretary — International Nepali Literature Society (2024–2026)

President — Nepalese Student Association (2021–2022)

President — Engineering Literature Forum (2012–2014)

Teaching assistant across multiple CS courses. Capstone project supervision. Workshop organizer for ML fundamentals and reproducibility.